Tech

Telemetryczny Systems for Smart Monitoring Today

Telemetry is quietly shaping the way modern systems communicate, monitor, and respond to real-world conditions. A telemetryczny (Polish for “telemetric”) approach allows devices, machines, and infrastructure to send performance data from remote locations to a central system for analysis. From industrial equipment to environmental sensors, this technology helps organizations understand what is happening in real time. Instead of waiting for failures, engineers can observe trends, predict issues, and respond quickly. As industries grow more connected, remote monitoring tools have become essential for improving efficiency, safety, and decision making.

Understanding Remote Monitoring Technology

Remote monitoring technology allows systems to collect operational information without requiring a person to be physically present. Sensors measure variables such as temperature, pressure, location, and movement, then transmit that information to a control center. Engineers can observe system performance through dashboards or alerts. This approach saves time and resources because technicians do not need to inspect every component manually.

Many industries depend on accurate sensor data to maintain reliability. Power plants track turbine performance, logistics companies monitor vehicle locations, and healthcare providers observe patient vitals through connected devices. Each example demonstrates how remote data collection supports faster decision making. When information travels instantly, teams can react to small changes before they become large operational problems.

The rise of cloud computing has strengthened this technology even further. Data can now be processed, visualized, and stored on scalable platforms accessible from anywhere. Businesses no longer rely only on local servers or isolated networks. Instead, integrated systems combine sensors, communication protocols, and software analytics to create a connected environment that supports real-time operational awareness.


How Telemetryczny Data Transmission Works

A telemetryczny system typically begins with sensors that gather measurements from equipment or environmental conditions. These sensors convert physical signals into digital information that can be transmitted electronically. Communication modules then send the data through wireless networks, satellites, or internet connections. The information arrives at centralized software that interprets and stores the readings.
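As a minimal sketch of the sensor-to-platform path described above, the Python below packs one reading into a small binary frame and unpacks it on the receiving side. The frame layout, field names, and function names are assumptions made for illustration; real deployments would more likely use an established protocol and message format than a hand-rolled one.

```python
import struct
from typing import Tuple

# Hypothetical frame layout (an assumption, not a standard):
# sensor id (uint16), Unix timestamp (uint32), reading (float32), big-endian.
FRAME_FORMAT = ">HIf"


def encode_reading(sensor_id: int, timestamp: int, value: float) -> bytes:
    """Pack one digitized reading into a fixed-size frame for transmission."""
    return struct.pack(FRAME_FORMAT, sensor_id, timestamp, value)


def decode_reading(frame: bytes) -> Tuple[int, int, float]:
    """Unpack a received frame back into (sensor_id, timestamp, value)."""
    sensor_id, timestamp, value = struct.unpack(FRAME_FORMAT, frame)
    return sensor_id, timestamp, value


# Example: a temperature reading of 21.5 from hypothetical sensor 7.
frame = encode_reading(sensor_id=7, timestamp=1_700_000_000, value=21.5)
decoded = decode_reading(frame)
```

A fixed-size binary frame keeps each transmission small, which matters on low-bandwidth links such as satellite or cellular connections.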

Once the information reaches a monitoring platform, analytical tools begin processing it. Software algorithms compare incoming measurements with expected performance ranges. If unusual patterns appear, alerts notify engineers or operators. This immediate feedback loop allows teams to investigate potential issues quickly, preventing costly downtime or system failures that might otherwise go unnoticed.
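The comparison step can be sketched as a simple range check. The metric names and limits below are hypothetical values chosen for illustration, not figures from any particular system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExpectedRange:
    low: float
    high: float


# Hypothetical expected performance ranges (illustrative values only).
EXPECTED = {
    "temperature_c": ExpectedRange(10.0, 80.0),
    "pressure_kpa": ExpectedRange(90.0, 350.0),
}


def check_reading(metric: str, value: float) -> Optional[str]:
    """Return an alert message if the value is out of range, else None."""
    expected = EXPECTED.get(metric)
    if expected is None:
        return f"no expected range configured for {metric}"
    if value < expected.low or value > expected.high:
        return (
            f"{metric}={value} outside expected range "
            f"[{expected.low}, {expected.high}]"
        )
    return None


normal = check_reading("temperature_c", 25.0)   # within range, no alert
alert = check_reading("temperature_c", 95.0)    # above the upper limit
```

In practice the alert string would be handed to whatever notification channel the operators use; this sketch only shows the decision itself.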

Data visualization tools make the process easier to understand. Instead of reviewing raw numbers, operators see charts, dashboards, and real-time graphs. These visuals highlight trends that may develop gradually over days or weeks. When engineers can recognize patterns early, they gain valuable insight into maintenance needs and system performance across multiple operational environments.

Key Components of Telemetry Systems

Every monitoring network relies on several essential components working together smoothly. Sensors serve as the foundation because they capture the physical measurements that systems need to analyze. Communication hardware follows closely behind. It ensures information travels reliably between remote equipment and the central monitoring platform, even across long distances.

Data processing platforms transform raw information into meaningful insights. These platforms may operate on cloud servers, private networks, or hybrid infrastructure. Engineers use software dashboards to track system health, compare historical performance, and identify anomalies. Reliable processing systems also archive information so organizations can analyze trends over months or years.

Another important element involves system integration. Monitoring tools must connect with existing operational software such as maintenance systems, control interfaces, or enterprise resource platforms. When data flows smoothly between systems, organizations gain a broader understanding of operations. Integration helps teams move from simple monitoring toward predictive management and smarter automation.

Benefits for Industry and Infrastructure

Industries that rely on complex equipment gain significant advantages from advanced monitoring solutions. Continuous data collection helps engineers identify subtle performance changes long before visible problems appear. When organizations understand how systems behave under normal conditions, they can detect irregular patterns immediately and schedule maintenance before costly failures occur.

Operational efficiency also improves because teams can monitor many assets simultaneously. Instead of inspecting each machine manually, operators review centralized dashboards that display system status across entire facilities or networks. This capability becomes especially valuable in large environments such as manufacturing plants, power grids, or transportation systems where thousands of components operate continuously.

Safety represents another major benefit. Real-time monitoring allows organizations to detect dangerous conditions early. Temperature spikes, pressure changes, or unusual vibrations often signal potential hazards. When alerts reach technicians quickly, they can respond before conditions escalate. This proactive approach protects workers, equipment, and surrounding communities from preventable risks.

Real-World Applications Across Sectors

Modern monitoring technology appears in more industries than many people realize. Transportation companies use it to track vehicle performance and fuel efficiency across large fleets. Sensors installed in trucks or trains transmit operational information continuously, allowing logistics managers to optimize routes, schedule maintenance, and improve overall fleet performance.

Energy companies rely heavily on remote monitoring for pipelines, wind turbines, and power distribution systems. Equipment often operates in remote or harsh environments where manual inspection is difficult. Continuous data transmission allows engineers to observe performance conditions without sending technicians to every location, reducing both costs and operational risk.

Environmental research organizations also benefit from these systems. Remote sensors measure rainfall, water levels, air quality, and wildlife activity across large geographic areas. Scientists collect consistent data without disturbing natural habitats. Over time, these measurements reveal environmental patterns that support better planning, conservation strategies, and climate research.

Challenges in Telemetry Implementation

Despite its advantages, implementing advanced monitoring infrastructure requires careful planning. Organizations must design systems that handle large volumes of data without overwhelming network resources. As sensor networks expand, maintaining stable connections and secure data transmission becomes increasingly important for operational reliability.

Data management presents another challenge. Continuous monitoring generates massive streams of information, and not all of it holds equal value. Engineers must decide which measurements require immediate attention and which can be stored for long-term analysis. Effective filtering and processing techniques prevent information overload while preserving useful operational insights.
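One common filtering technique is a deadband filter, which forwards a reading only when it differs from the last forwarded value by more than a set threshold. The sketch below is one possible approach with a hypothetical threshold, not the only way to decide which measurements matter.

```python
from typing import List


def deadband_filter(readings: List[float], threshold: float) -> List[float]:
    """Keep the first reading, then only readings that differ from the
    last kept reading by more than the threshold."""
    kept: List[float] = []
    for value in readings:
        if not kept or abs(value - kept[-1]) > threshold:
            kept.append(value)
    return kept


raw = [20.0, 20.1, 20.2, 21.5, 21.6, 19.0]
significant = deadband_filter(raw, threshold=1.0)
```

Readings that are dropped here can still be archived locally for long-term analysis; the filter only decides what is worth immediate attention.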

Security concerns also deserve serious attention. When devices transmit data across networks, they can become potential entry points for cyber threats. Companies must implement encryption, authentication systems, and secure communication protocols to protect sensitive operational information. Strong cybersecurity practices ensure monitoring systems remain reliable and trustworthy over time.
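As a small illustration of the authentication part of that advice, the sketch below signs a telemetry payload with an HMAC so the receiver can detect tampering. It uses only the Python standard library; the payload fields and the shared key are hypothetical, and the sketch does not encrypt anything, so it would normally sit alongside an encrypted transport such as TLS.

```python
import hashlib
import hmac
import json


def sign_payload(payload: dict, key: bytes) -> dict:
    """Serialize the payload and attach an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_message(message: dict, key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(
        key, message["body"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, message["tag"])


# Hypothetical shared secret; a real key would come from secure storage.
shared_key = b"example-shared-secret"
message = sign_payload({"sensor": "pump-3", "pressure_kpa": 212.4}, shared_key)
is_valid = verify_message(message, shared_key)
```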

Future Trends in Monitoring Technology

The future of monitoring technology continues to evolve alongside advances in artificial intelligence and connected devices. Machine learning systems now analyze large datasets to identify patterns humans might miss. These algorithms can predict equipment failures days or weeks before they occur, helping organizations shift from reactive repairs toward predictive maintenance strategies.
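Production predictive maintenance relies on trained models, but the underlying idea can be illustrated with something much simpler. The sketch below is a stand-in, not a machine learning system: it fits a least-squares line to recent readings and estimates how long until the trend crosses a failure threshold. The vibration values and threshold are hypothetical, and the function assumes at least two readings with distinct timestamps.

```python
from typing import List, Optional


def hours_until_threshold(
    hours: List[float], values: List[float], threshold: float
) -> Optional[float]:
    """Fit a straight line to (hours, values) and estimate the time from
    the last reading until the line reaches the threshold.
    Returns None when the trend is flat or falling."""
    n = len(hours)
    mean_x = sum(hours) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - hours[-1])


# Hypothetical vibration readings (mm/s) rising by 0.5 per hour.
remaining = hours_until_threshold(
    hours=[0, 1, 2, 3], values=[2.0, 2.5, 3.0, 3.5], threshold=5.0
)
```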

Edge computing is another development gaining attention. Instead of sending all information to distant servers, processing can occur directly near the sensor location. This approach reduces network congestion and speeds up response times. Devices can evaluate data locally and send only the most important insights to central systems for deeper analysis.
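A rough sketch of that local evaluation: the edge device reduces a window of raw readings to a compact summary and sends only the summary upstream. The field names and alert limit are assumptions for illustration, and the function assumes a non-empty window.

```python
from statistics import mean
from typing import Dict, List


def summarize_window(readings: List[float], alert_above: float) -> Dict[str, object]:
    """Reduce a non-empty window of raw readings to a small summary
    that can be sent to the central system instead of every sample."""
    highest = max(readings)
    return {
        "count": len(readings),
        "min": min(readings),
        "max": highest,
        "mean": mean(readings),
        "alert": highest > alert_above,
    }


# Three raw samples collapse into a single summary record.
summary = summarize_window([20.0, 22.0, 24.0], alert_above=23.0)
```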

As connected infrastructure expands, integration with smart cities and autonomous systems becomes more likely. Traffic management, environmental monitoring, and public utilities could all share data streams to improve urban planning. When multiple systems exchange information, cities gain a comprehensive understanding of operations that supports better resource management.

Telemetryczny Technology and the Future of Smart Monitoring

The growing importance of connected infrastructure means telemetryczny technology will continue shaping how organizations monitor and manage complex systems. By enabling continuous data collection from remote environments, these systems transform raw measurements into actionable insights. Engineers gain visibility into operations that once remained hidden until problems appeared.

Organizations that invest in modern monitoring solutions often discover improvements beyond simple maintenance. Data insights reveal inefficiencies, operational bottlenecks, and opportunities for optimization. Over time, the accumulated information becomes a valuable strategic resource that guides better planning, infrastructure design, and resource allocation.

As industries move toward automation and intelligent systems, the role of telemetryczny monitoring will only expand. Sensors, communication networks, and advanced analytics will continue working together to create environments where machines communicate their status constantly. That steady flow of information helps businesses operate more safely, efficiently, and intelligently in an increasingly connected world.

Conclusion

In today’s connected world, telemetryczny technology is no longer a luxury but a necessity for industries and organizations aiming to optimize operations. By continuously collecting and analyzing remote data, it allows teams to anticipate problems, enhance efficiency, and make smarter decisions. From industrial equipment to environmental monitoring, the insights gained reduce downtime, improve safety, and unlock strategic advantages that manual methods simply cannot achieve.

Investing in robust telemetry systems also prepares organizations for future technological growth. As AI, edge computing, and smart infrastructure evolve, these systems provide the backbone for predictive management and real-time decision making. The ability to turn raw measurements into actionable intelligence is what sets modern operations apart, giving organizations a clear edge in competitiveness and reliability.

Ultimately, telemetryczny monitoring represents a shift from reactive problem-solving to proactive optimization. The combination of real-time visibility, predictive analytics, and secure data transmission ensures that systems are safer, more efficient, and smarter than ever. Organizations embracing this technology are not only improving current operations but also positioning themselves for a future where connected systems define success.


Rental vs. Repair: The Carbon Footprint of Maintaining an Old Chiller on Life Support

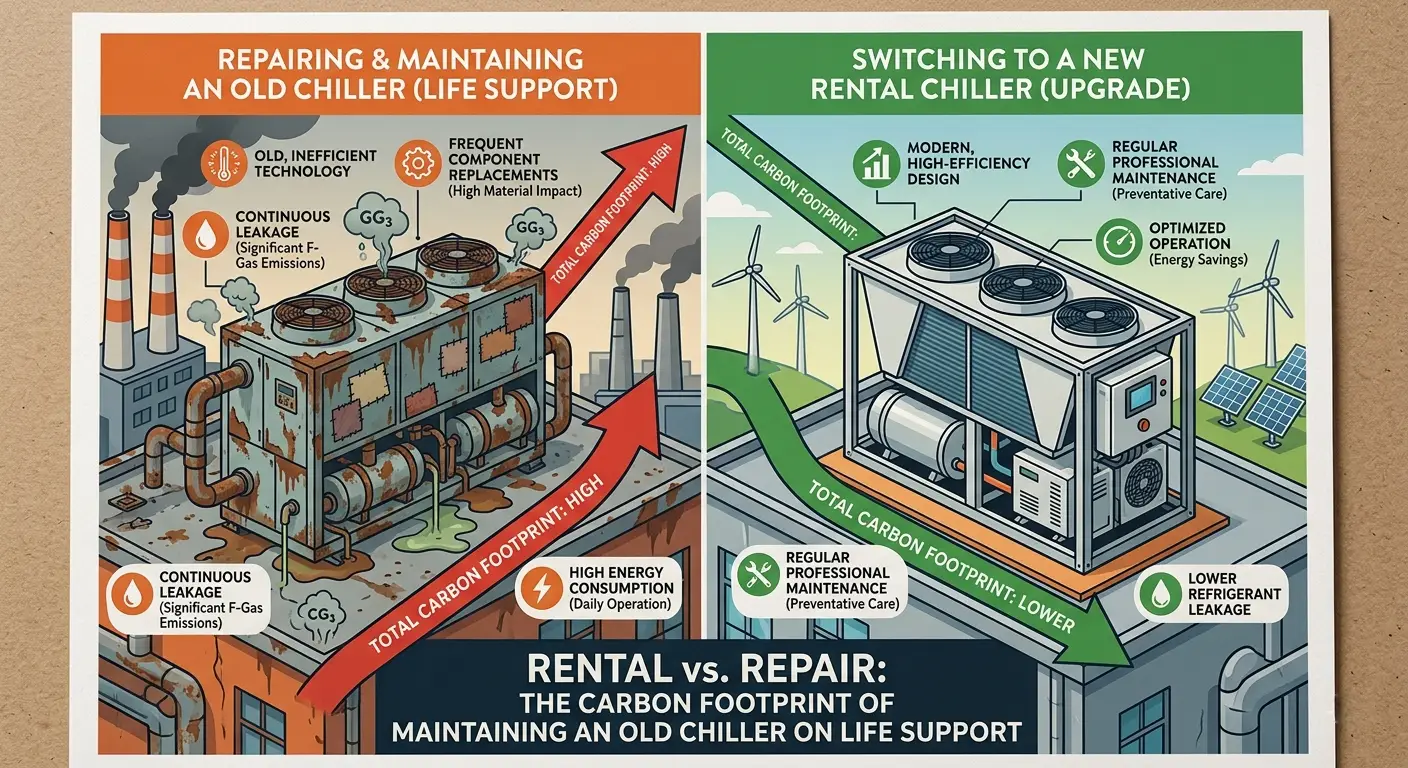

A worn-out cooling unit wheezing its way through a humid summer is a familiar sight to many Australian facility managers. The temptation is to patch and mend, but the environmental cost of keeping an old system alive is becoming too hard to overlook.

With rising energy costs and stricter carbon reporting, chiller hire is no longer just a short-term fix. It has become one of the main approaches to decarbonisation.

The Unseen Environmental Cost of Old Systems

Old chillers are frequently ‘energy hogs’. A unit installed fifteen years ago lacks the variable speed drives and advanced compressor technology found in current rental chillers. In Australia’s extreme climate, an inefficient chiller not only raises operating costs but also drastically increases a building’s carbon footprint, with the chiller sometimes accounting for as much as 40 percent of total energy usage.

Refrigerant Leaks and GWP

In addition to being less energy efficient, old units usually rely on older refrigerants with a high Global Warming Potential (GWP). Even minor leaks can do serious environmental damage. Current rental fleets use low-GWP alternatives and are subject to stringent maintenance, so your cooling solution will not work against your ESG goals.

Strategic Advantages of Modern Chiller Hire

Businesses can avoid the repair trap by choosing a high-efficiency rental unit. Managers can install up-to-date technology immediately, rather than sinking capital into a system that will never become modern.

Operational Efficiency and NABERS Ratings

Building performance in Australia is measured through NABERS ratings, and a modern hire unit can improve these scores considerably. Current rental chillers have built-in smart monitoring that adjusts output in real time, so the system draws only the power it needs, which significantly reduces greenhouse gas emissions.

The ‘Bridge to Permanent’ Solution

Renting a chiller offers the breathing room to plan a permanent replacement that is genuinely sustainable. It avoids panic-buying an undersized or inefficient unit just to keep the lights on, and it keeps long-term environmental objectives in view.

Summary

The repair-or-replace decision is no longer only a financial choice, but a climate choice. Through chiller hire, Australian businesses can immediately reduce their carbon footprint, improve energy efficiency, and switch to a more sustainable model of operation without incurring heavy capital expenditure. Legacy systems become a liability when modern rental solutions offer a greener way to cool.


The Delegation Gap: Why Managers Struggle to Let Go and What Actually Fixes It

Delegation fails for a reason that managers rarely name out loud. They are not holding on to work because they enjoy the control or because they do not trust their team. They are holding on because letting go feels riskier than it should. The task they delegate disappears into a system where they cannot see its progress, cannot verify the approach being taken, and will not find out whether something went wrong until it is too late to course-correct without a significantly larger intervention than would have been needed earlier.

The rational response to that uncertainty is to stay involved, to check in frequently, and to hold on to the highest-stakes tasks entirely. The result is a manager who is perpetually overloaded with work that their team is capable of doing, and a team that is perpetually underutilized because their manager’s anxiety about the handoff is greater than their confidence in the infrastructure that would make the handoff safe. Delegation does not fail because of trust. It fails because the infrastructure that should make trust rational is missing. The fix is project management tools that make task progress visible, decisions traceable, and commitments trackable without requiring the manager to be involved in every step to maintain confidence that the work is on course.

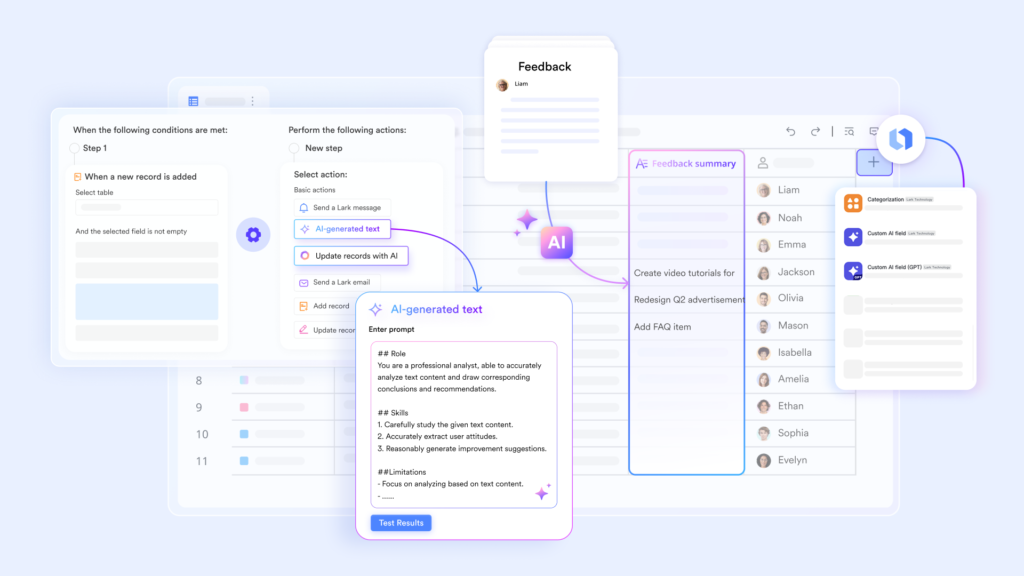

Task ownership that is visible without a check-in with Lark Base

The check-in is a symptom of invisible work. When a manager delegates a task and then cannot see any evidence of its progress, the only way to maintain awareness of where things stand is to ask. The asking generates a message, which generates a response, which generates a follow-up, and the check-in cycle that was supposed to be a delegation relationship becomes a low-frequency version of the micromanagement the delegation was meant to replace. The manager gets partial reassurance. The team member gets the implicit message that their work is being monitored rather than trusted. Neither party achieves what delegation was supposed to create.

Lark Base makes task progress visible to the delegating manager without requiring any active communication from the team member. “People fields” name the current owner of every task at the record level, so ownership is a structural property of the task rather than an informal agreement that exists only in two people’s memories. Dropdown status fields update in a single action, so the team member who completes a milestone changes the record’s status and the manager’s dashboard reflects the change automatically without a message being composed or sent. Automated notifications alert the manager when a task reaches a new stage, when a deadline is approaching without the status having advanced, and when a record has been flagged as blocked, so the manager receives targeted operational signals rather than waiting for a scheduled check-in to discover where the work actually stands.
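The notification rules described above amount to a few conditions on a task record. The sketch below illustrates that logic in plain Python; it is not Lark Base's API or automation syntax, and the status names, the two-day warning window, and the function name are assumptions.

```python
from datetime import date, timedelta
from typing import Optional


def needs_alert(
    status: str, due: date, today: date, warn_days: int = 2
) -> Optional[str]:
    """Return the reason a manager should be notified, or None.
    Status names and the warning window are hypothetical."""
    if status == "blocked":
        return "task flagged as blocked"
    if status != "done" and due - today <= timedelta(days=warn_days):
        return "deadline approaching without completion"
    return None


today = date(2026, 3, 10)
on_track = needs_alert("in_progress", due=date(2026, 3, 20), today=today)
at_risk = needs_alert("in_progress", due=date(2026, 3, 11), today=today)
blocked = needs_alert("blocked", due=date(2026, 3, 20), today=today)
```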

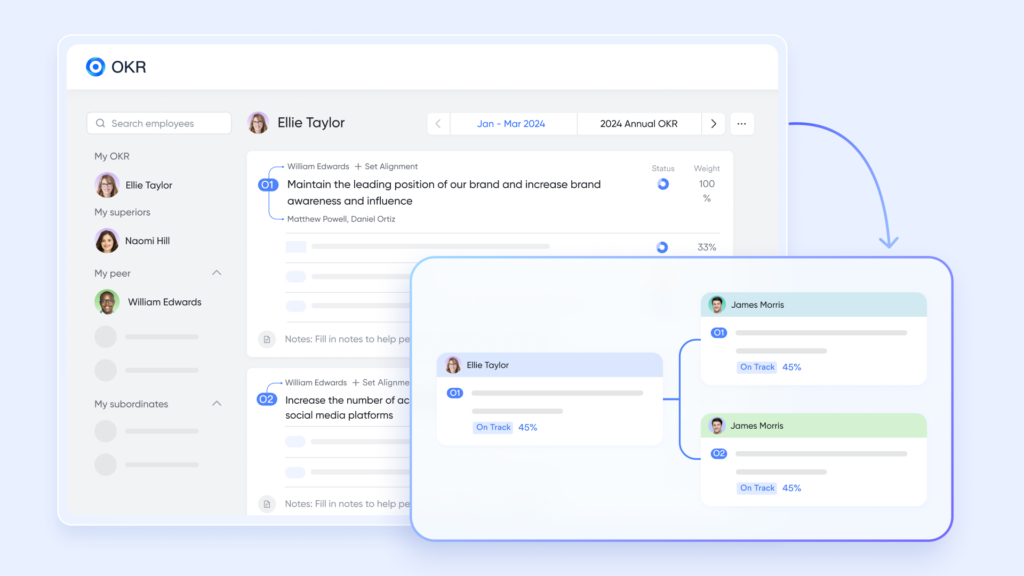

Strategic alignment the team member carries themselves with Lark OKR

A delegated task that the team member does not understand in its strategic context will be executed in ways the manager would not have chosen, not because the team member is unskilled but because they are making judgment calls without the full picture. Every judgment call they make in the absence of strategic context is a potential deviation from the manager’s intent, and the manager who anticipates this will tend to over-specify the task rather than delegate it genuinely, which is a sophisticated form of the same problem.

Lark OKR removes the strategic context gap by making every team member’s understanding of organizational priorities a permanent, self-serve resource rather than something transmitted exclusively through manager communication. When a team member can see how their delegated task connects to the team’s key results and those key results connect to the company’s objectives, they can make judgment calls that the manager would have made without requiring the manager to brief them on the strategic landscape before every significant decision. Individual key results that connect personal work to team objectives give team members the orientation they need to self-correct when an unexpected decision point arises, so delegation produces genuinely autonomous execution rather than constrained task completion.

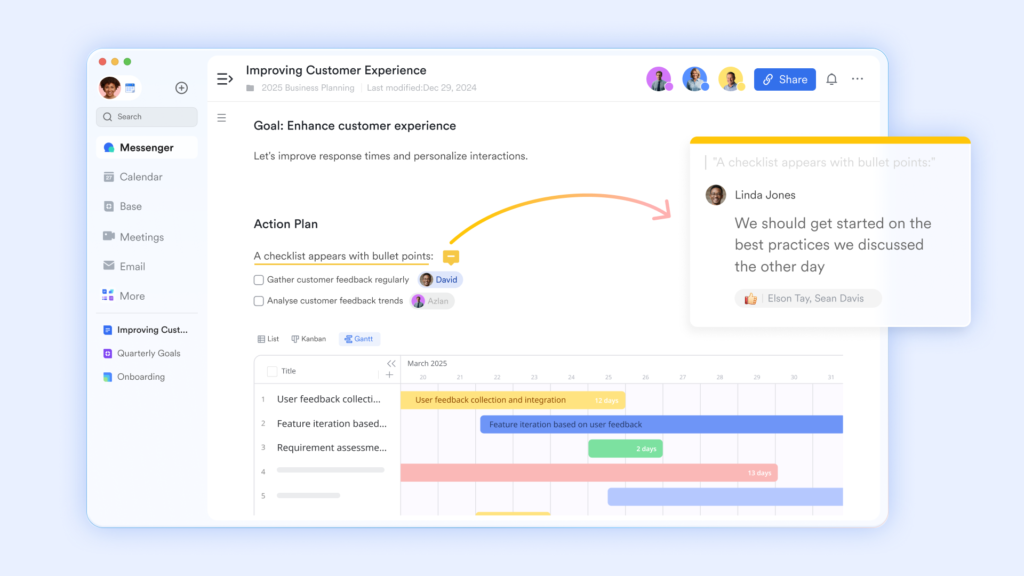

A decision record that does not require verbal reporting with Lark Docs

The verbal report is the manager’s substitute for a documentation infrastructure. Because the work is not documented, the only way to know what decisions are being made and why is to ask. The team member describes their approach. The manager approves or redirects. The decision exists in both parties’ memories until one of them forgets it, and the next time a similar decision arises, the same conversation has to happen again from the beginning. The verbal reporting cycle is not just inefficient. It is the mechanism by which delegation remains dependent on the manager’s availability at every decision point rather than becoming genuinely self-sustaining.

Lark Docs replaces the verbal report with a living decision record that the team member maintains as a natural part of doing the work. “Version History” logs every change to the working document with the editor’s name and timestamp, so the manager who wants to understand the current approach can read the document’s edit history rather than requesting a verbal briefing. “@mention” allows the team member to flag specific decisions for the manager’s awareness directly within the document without requiring a separate message, so the manager receives targeted visibility into the choices that genuinely warrant their attention rather than a comprehensive verbal report that covers both important and routine matters. Over time, the document record builds a pattern of how the team member thinks and decides that gives the manager increasing confidence to delegate further rather than maintaining a narrow scope of delegated work indefinitely.

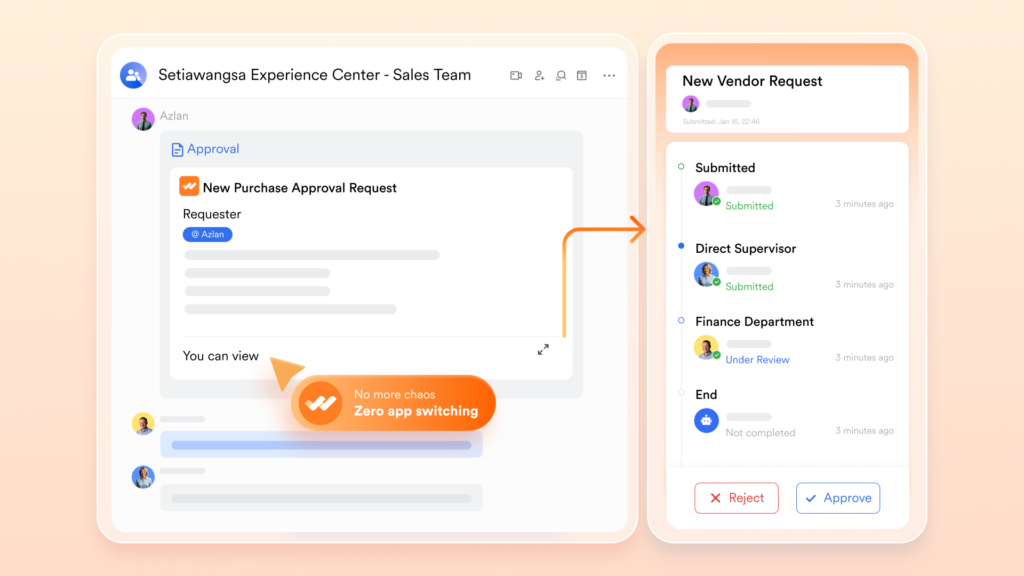

Smart routing that replaces guesswork with Lark Approval

One of the most common delegation failures is the one that happens at the boundary of a team member’s authority. They encounter a decision that they believe may exceed what they have been delegated to decide, but they are uncertain whether it does, and the cost of escalating unnecessarily feels higher than the cost of making a judgment call. They make the judgment call. The manager later discovers that a decision was made that should have been escalated, and the confidence they had been building in the team member’s judgment takes a step backward.

Lark Approval removes the guesswork from escalation by building the escalation threshold directly into the approval workflow. “Conditional Branches” define exactly which characteristics of a request, such as its budget value, its client tier, its risk category, or the scope of commitment it creates, determine whether it falls within the team member’s delegated authority or requires a higher-level sign-off. The team member who encounters a decision point submits it through the approval system and the routing logic makes the determination automatically, so the right authority reviews the right decisions without anyone having to interpret the boundary of their own delegation in real time. The manager gains confidence that significant decisions will surface appropriately without their direct involvement, which is the precise condition under which genuine delegation becomes sustainable rather than anxiety-inducing.
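The routing idea can be sketched as a small decision function. This is an illustration of conditional routing in general, not Lark Approval's actual configuration format; the field names, approver roles, and budget limit are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ApprovalRequest:
    budget: float
    client_tier: str   # e.g. "standard" or "enterprise" (hypothetical tiers)
    risk: str          # e.g. "low" or "high" (hypothetical categories)


def route(request: ApprovalRequest, budget_limit: float = 5000.0) -> str:
    """Decide who must sign off, based on the request's characteristics."""
    if request.risk == "high":
        return "director"
    if request.budget > budget_limit or request.client_tier == "enterprise":
        return "manager"
    return "team_member"


within_authority = route(ApprovalRequest(1200.0, "standard", "low"))
needs_manager = route(ApprovalRequest(8000.0, "standard", "low"))
needs_director = route(ApprovalRequest(300.0, "standard", "high"))
```

Because the thresholds live in the routing rule rather than in anyone's head, the team member never has to guess whether a decision exceeds their authority.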

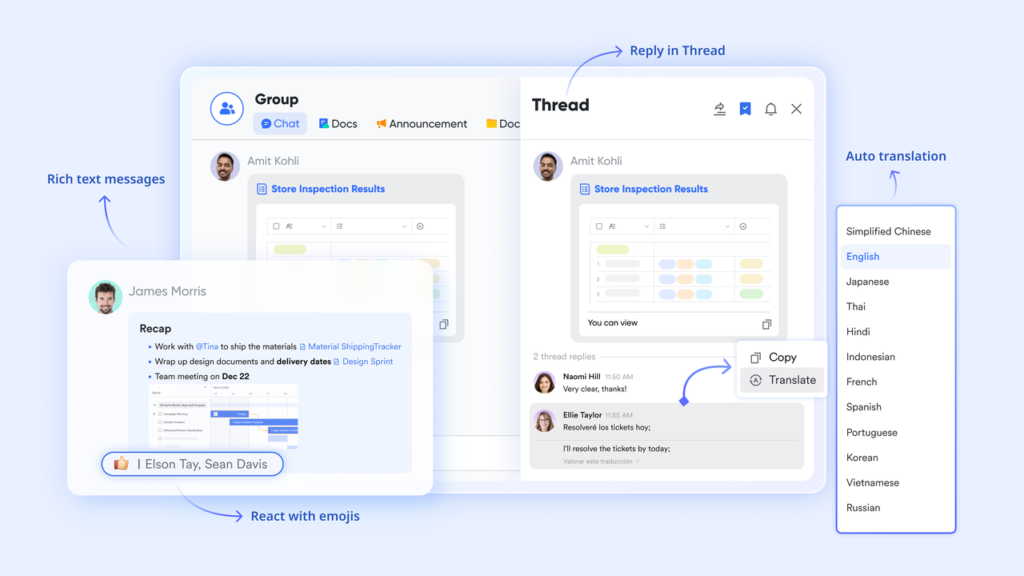

Presence without the pressure with Lark Messenger

The manager who delegates work but then messages the team member every few hours to ask how it is going has not delegated. They have redistributed the execution while retaining the management overhead in a slightly different form. Genuine delegation requires communication patterns that give the manager confidence without creating the expectation of constant availability from the team member, and communication tools that default to immediacy make that balance structurally difficult to achieve.

Lark Messenger’s “Scheduled Messages” allow managers to establish a predictable communication rhythm with delegated team members without requiring either party to be available for real-time exchange at any given moment. The manager composes a check-in or a piece of encouragement when it is convenient and schedules it to arrive at the team member’s most useful moment. “Read/Unread Status” gives the manager confirmation that important communications have been received without requiring the team member to respond immediately, so the awareness of contact is established without an implicit response obligation that interrupts focused work. “Chat Tabs & Threads” allow the team member to maintain a thread of updates on delegated work within the project group that the manager can review when they choose rather than in real time, so the information flow is continuous without the communication exchange being constant.

Bonus: Why delegation training does not solve the delegation problem

Organizations that recognize their managers are holding on to too much work typically respond with training: workshops on delegation skills, coaching on how to give clear briefs, and frameworks for identifying which tasks are safe to hand off. These interventions address the behavioral dimension of a problem whose root cause is structural.

The manager who has been trained to delegate better but still cannot see their team member’s task progress, still receives decisions only through verbal reports, and still has no reliable escalation mechanism will revert to their old behaviors within weeks of the training ending, because the underlying uncertainty that drove those behaviors has not been resolved. Tools like Asana and monday.com improve task visibility. Confluence and Notion improve documentation. But none addresses the full delegation chain from task tracking to strategic alignment to decision records to escalation logic to communication patterns. Looking at Google Workspace pricing and these specialist tools alongside each other reveals a system where the five conditions for safe delegation are split across five different products. Lark puts all five in one environment, so the infrastructure that makes delegation rational is available to every manager without requiring them to assemble it from parts.

Conclusion

The delegation gap closes when the infrastructure makes letting go feel safe. When task progress is visible without a check-in, strategic context is self-serve, decisions are documented without a verbal report, escalation is automatic rather than judgment-dependent, and communication maintains awareness without demanding constant exchange, the manager’s anxiety about delegation resolves not through a change in their personality but through a change in what the system shows them. A connected set of productivity tools that makes delegation structurally safe is how organizations unlock the capacity of their managers and the potential of the teams that have been waiting for the opportunity to use it.


How Creators Are Actually Making Money With AI Video in 2026

AI video is no longer just a fun tool for making clips. In 2026, it has become part of how creators build real income. What changed is not just video quality. What changed is the economics.

AI video lowers production cost. It cuts turnaround time. It makes content testing cheaper. That means creators can publish more, try more formats, and find what works faster.

That does not mean AI video prints money by itself. It does not. A weak idea is still weak. A bad offer still will not convert. And low-trust content still performs badly.

But if a creator already understands audience, messaging, and distribution, AI video can make the entire system more efficient.

That is where the money comes from.

In this article, I want to break down how creators are making money with AI video in 2026, which monetization models are working, and why workflow matters more than most people think.

Why AI video matters for creator monetization in 2026

The biggest reason AI video matters is simple: it changes the cost of making content.

A few years ago, if I wanted to test five video ideas, I usually had to pick one and hope it worked. The other four ideas stayed in my notes because filming, editing, and revising took too much time.

Now I can test more angles with less effort, and that changes creator monetization in three important ways.

Lower production cost means higher margin

If content costs less to make, more revenue stays with the creator.

This matters whether the creator makes money from ads, affiliate links, sponsorships, or digital products. Lower production cost improves the margin on every monetization model.

Faster output means more chances to find winners

Most creator income does not come from random luck. It comes from repeated testing.

You test a different hook, a different product angle, a different storytelling format, or even a different call to action.

AI video makes that testing cycle much faster.

More variations improve monetization odds

A creator who publishes one polished video might still lose to a creator who publishes five strong variations and learns faster.

That is why AI video matters. It does not replace skill. It increases speed and volume around a monetization strategy.

The five main ways creators are making money with AI video

There are many ways to monetize content, but most AI video income today falls into five groups:

- Ad revenue

- Affiliate marketing

- Sponsorships and brand deals

- Digital products

- Client work and services

Each one benefits from AI video in a different way.

1. Ad revenue from YouTube and short-form platforms

This is still the most familiar model.

Creators publish videos, grow an audience, and monetize views through platform payouts. AI video helps here because it makes consistent publishing easier.

That matters because ad revenue depends on scale. One video rarely changes everything. What matters is upload frequency, retention, topic fit, and audience growth over time.

Why AI video helps ad revenue

It helps creators publish more often, with more visual variety, and with lower production friction.

That is useful for:

- faceless YouTube channels

- educational content

- niche explainers

- short-form storytelling

- list-based content

AI video does not automatically improve watch time. But it lets creators test more formats that might improve watch time.

The real advantage is consistency.

A creator who can produce three solid videos a week instead of one weakly edited video every two weeks has a much better chance of building monetizable traffic.

2. Affiliate marketing with AI video

This is one of the strongest monetization models right now.

Affiliate marketing works especially well with AI video because video is good at showing products, comparing options, and guiding people toward a click.

I think this is where a lot of creators underestimate AI. They focus on “viral clips” when the better use case is often commercial content.

Why affiliate works so well with AI video

Affiliate content usually needs:

- fast product demos

- clear explanations

- strong visuals

- frequent creative refreshes

AI video lowers the cost of producing all of that.

A creator can make product roundups, comparison videos, short-form reviews, how-to clips, and top-tools lists, all without setting up a full production workflow every time.

Where the money comes from

The affiliate model works when video content does one of these things:

- solves a problem

- shows a product in action

- compares alternatives

- gives a clear recommendation

That is why AI video affiliate content often works best in niches like:

- software

- creator tools

- productivity

- e-commerce tools

- online business

- education

Why more variations improve affiliate revenue

Affiliate income improves when creators test:

- different openings

- different recommendation angles

- different product positioning

- different visual styles

A static blog post gives one chance. AI video gives many.

That makes affiliate marketing one of the most practical AI video monetization models in 2026.

3. Sponsorships and branded content

Brands do not just want reach anymore. They want output.

They want creators who can:

- move fast

- test concepts

- adapt messaging

- deliver multiple variations

That is why AI video is becoming useful for sponsorships.

How creators use AI video for brand work

Creators use AI video to:

- mock up campaign ideas before pitching

- create sponsor-friendly visual concepts

- produce UGC-style content faster

- localize branded content

- turn one campaign idea into multiple deliverables

That gives creators a strong advantage, especially if they work with smaller brands that do not have large internal production teams.

Why brands still care about trust

This part matters. AI video helps with speed, but sponsorship revenue still depends on trust. If the content feels generic, lazy, or off-brand, it will not perform.

So the winning approach is not “replace yourself with AI.”

The winning approach is “use AI to produce better sponsor content with less friction.”

That means clear messaging, audience fit, a strong review process, and brand-safe output.

The creator still matters. AI just makes the production side lighter.

4. Digital products and courses

This is the highest-margin model for many creators.

Instead of depending only on ads or brand deals, creators use content to sell courses, guides, templates, prompt packs, playbooks, and memberships.

AI video supports this model in two ways.

First, it helps sell the product

Creators can use AI video for:

- sales page explainers

- launch videos

- social promo clips

- course previews

- feature walkthroughs

That shortens the time between building a product and marketing it.

Second, it helps package the knowledge

A creator who teaches something can use AI video to turn:

- slides into explainers

- written lessons into visual summaries

- course updates into short announcements

That makes educational content easier to maintain.

Why digital products fit AI video well

This model works because the margin is high.

If AI helps reduce content production cost while the product price stays the same, profit increases.
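The arithmetic is simple enough to sketch. The function name and all figures below are hypothetical, chosen only to illustrate the point. They are not real benchmarks.

```python
def launch_profit(price: float, units_sold: int, content_cost: float) -> float:
    """Revenue from a product launch minus the cost of producing its content."""
    return price * units_sold - content_cost


# Same product, same price, same sales; only the content cost changes.
before = launch_profit(price=50.0, units_sold=100, content_cost=2000.0)
after = launch_profit(price=50.0, units_sold=100, content_cost=500.0)

print(before)          # 3000.0
print(after)           # 4500.0
print(after - before)  # 1500.0 extra profit from cheaper production alone
```

In this example, every dollar saved on production goes straight to profit because nothing else in the equation moves.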

That is one reason I see more creators moving toward AI-assisted product funnels rather than relying only on ad revenue.

5. Client work and creator services

Not every creator wants to become a media brand. Some want to monetize their skill directly.

AI video generators make that easier too.

A creator can offer short-form content packages, ad creatives, founder video systems, product demo videos, and landing page explainer content to startups, small businesses, and online brands.

Why this works

Most clients do not care whether a creator used a camera or AI. They care about speed, quality, clarity, and conversion potential.

If a creator can produce useful assets fast, that is valuable.

This model is often overlooked, but it can be one of the fastest ways to monetize AI video, especially for creators who already understand messaging and marketing.

Why affiliate marketing is one of the strongest AI video models

If I had to pick one model that fits AI video especially well, it would be affiliate.

That is because affiliate content benefits from three things AI video improves:

1. Speed of production

Affiliate opportunities move fast. New tools launch, features change, and creators need content quickly.

2. Volume of testing

Different product angles convert differently. AI video makes it easier to test:

- demo-first videos

- listicle videos

- review-style clips

- comparison videos

3. Lower cost per asset

A creator can make more monetizable content without spending thousands on production.

This is also where workflow platforms matter. If the creator is stacking too many disconnected tools, the speed advantage disappears.

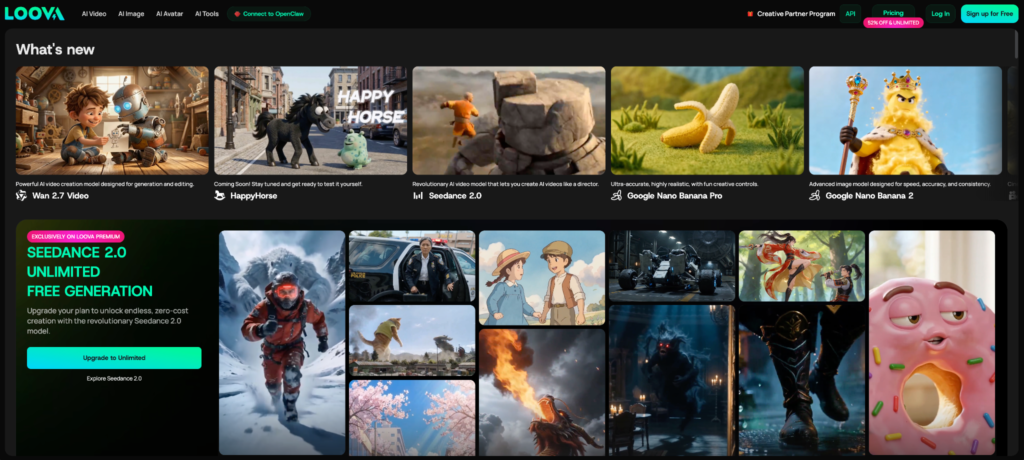

That is one reason creators increasingly use platforms like Loova for AI video workflows. When generation, editing, and iteration happen in one place, affiliate content gets easier to produce at scale.

How AI video improves YouTube and platform ad revenue

A lot of people assume more videos automatically mean more money. That is not true.

The platform still rewards retention, clarity, topic alignment, and consistency. AI helps with the consistency part. It can also help with format testing.

For example, a creator can test:

- story-first intros

- faster visual pacing

- different background styles

- different narrative structures

That helps improve watch behavior over time.

Retention still matters more than volume

I want to be clear here.

Publishing ten weak videos will not keep outperforming a few strong ones. AI video helps when it improves the output system, not when it floods platforms with low-value content.

That is why the best creators use AI to improve efficiency, increase testing, and support storytelling, instead of dumping meaningless content.

The AI video workflow behind successful monetization

This is the part many articles miss. Monetization does not depend only on content. It depends on workflow.

A creator who monetizes well usually has a repeatable system:

- Find a topic or offer

- Turn it into one or more repeatable video formats

- Publish consistently

- Track clicks, views, or conversions

- Improve what works

AI video helps when it fits into that system.

Formats matter more than random inspiration

The strongest creators are not asking, “What should I make today?”

They are asking:

- which format performs best

- which topic converts

- which creative angle deserves another variation

That is why repeatable content structures matter so much.

All-in-one platforms reduce workflow drag

Disconnected tools slow everything down.

One tool for image generation. Another for video. Another for editing. Another for voice. Another for export.

That stack becomes expensive and mentally heavy.

A unified platform reduces that drag. That is where Loova fits well for many creators. It helps keep content production, generation, and iteration inside one workflow instead of across five separate dashboards.

That matters more than most people realize.

What types of creators benefit most

Not every creator benefits in the same way. But some groups clearly gain more from AI video monetization.

YouTubers and storytellers

They benefit from faster visual production and more content experiments.

Short-form creators

They benefit from speed, variation, and trend adaptation.

Affiliate marketers

They benefit from more demos, comparisons, and creative refreshes.

Educators and solo founders

They benefit from explainers, course promos, and clear product content.

Small media teams

They benefit because AI lowers production cost without requiring a bigger headcount.

Common mistakes creators make when trying to monetize AI video

There are a few traps I see often.

Publishing low-value content at high volume

Volume is useful only when the content is still helpful or compelling.

Using AI visuals without a monetization path

A cool video is not a business model. The creator still needs a funnel, an offer, a trusted recommendation, and a clear CTA.

Ignoring audience trust

AI can help produce content faster, but it cannot fake trust. If the creator pushes irrelevant offers or low-quality products, monetization drops.

Using too many disconnected tools

This is a big one. Complex stacks reduce speed and increase burnout.

Chasing virality instead of building systems

One viral clip is exciting. A repeatable monetization format is worth much more.

How I would start monetizing AI video in a practical way

If someone asked me where to start, I would keep it simple.

Step 1: Pick one monetization model

Do not try to do ads, affiliate, brand deals, and product sales all at once. Choose one.

Step 2: Pick one repeatable content format

For example:

- tool comparisons

- product demo shorts

- story-based explainers

- niche educational clips

Step 3: Build a small prompt and content library

Save:

- successful prompts

- winning hooks

- proven structures

- best-performing CTA formats

Step 4: Track the right metrics

If the model is affiliate, track:

- clicks

- CTR

- conversion rate

If the model is ad revenue, track:

- retention

- watch time

- RPM trends
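For anyone tracking these in a spreadsheet or script, here is a minimal sketch of the calculations. It assumes common definitions: CTR as clicks divided by views, conversion rate as sales divided by clicks, and RPM as revenue per 1,000 views. Platforms may define these slightly differently (for example, CTR based on impressions), and the sample numbers are hypothetical.

```python
def ctr(clicks: int, views: int) -> float:
    """Click-through rate: share of views that led to a click."""
    return clicks / views if views else 0.0


def conversion_rate(sales: int, clicks: int) -> float:
    """Share of clicks that turned into a sale."""
    return sales / clicks if clicks else 0.0


def rpm(revenue: float, views: int) -> float:
    """Revenue per 1,000 views."""
    return revenue * 1000 / views if views else 0.0


# Hypothetical numbers for one video.
print(f"CTR: {ctr(50, 1000):.1%}")                     # CTR: 5.0%
print(f"Conversion rate: {conversion_rate(5, 50):.1%}")  # Conversion rate: 10.0%
print(f"RPM: {rpm(12.0, 4000):.2f}")                   # RPM: 3.00
```

Each function returns 0.0 when its denominator is zero, so a brand-new video with no views does not break the tracking sheet.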

Step 5: Improve the system before scaling

The goal is not maximum output on day one. The goal is a repeatable workflow that improves over time.

The future of creator monetization with AI video

AI lowers the barrier to entry. That is good and bad.

It means more creators can produce useful content faster. It also means competition increases. That is why the future advantage will not come from access to AI alone.

It will come from better strategy, stronger trust, clearer offers, faster workflows, and better format testing.

In other words, AI makes execution easier, but it also makes lazy content easier. The winners will be the creators who use AI inside strong systems.

Final thoughts

Creators are making money with AI video in 2026, but not because AI is magic.

They are making money because AI changes the economics of content: lower production cost, faster publishing, more testing, and better workflow efficiency. That helps creators monetize through ads, affiliate marketing, sponsorships, digital products, and client services.

If I had to sum it up simply, I would say this:

AI video does not create income by itself. It creates leverage.

And creators who build repeatable systems around that leverage are the ones making real money.

If I were starting today, I would not chase every trend. I would choose one monetization path, one repeatable format, and one workflow platform that keeps production simple. For a lot of creators, that means using a unified system like Loova to reduce friction and produce more monetizable content without building a messy tool stack.

That is where the real advantage starts.

Frequently Asked Questions

Can creators really make money with AI video?

Yes. Creators are already using AI video to support ad revenue, affiliate marketing, sponsorships, digital products, and client work. The income comes from the business model, not the AI alone.

What is the best way to monetize AI-generated videos?

It depends on the creator, but affiliate marketing, ad revenue, and digital products are some of the strongest models because they benefit directly from faster content production.

Is affiliate marketing good for AI video creators?

Yes. It is one of the best fits because AI video helps creators produce more demos, comparisons, and product-focused content quickly.

Can AI videos get monetized on YouTube?

Yes, if they follow platform rules and provide real value. Monetization still depends on audience retention, originality, and policy compliance.

What are the best AI video tools for creators in 2026?

The best tools depend on workflow needs, but creators increasingly prefer platforms that combine video generation, editing, and creative variation in one place.

How do beginners start making money with AI video?

The easiest path is to pick one format and one monetization model first. For many beginners, that means short product videos for affiliate content or simple educational videos tied to digital products.

Do brands pay for AI-generated content?

Yes, but they still care about quality, fit, and trust. AI helps speed up production, but the creator still needs to deliver strong brand-aligned content.

Is AI video a real side hustle or just hype?

It can be a real side hustle if the creator uses it to support a clear monetization model. Without strategy, it stays hype. With a system, it can become a useful income tool.
